Escaping Flatland

STEM vs. Humanities Training

At Brown, I taught a course called Algorithms for the People. When I introduced the course in 2019, I didn’t have a plan or specific goal. I just felt that I needed to expose our students to some of the harms of the technologies that they might be asked to build in the future. Not to prescribe particular choices, but so that they would have the opportunity to think about these things in advance and form their own analyses.

So I put a syllabus together. But there were no technical homeworks, no book, no programming, no algorithms, no proofs. The readings came mostly from investigative journalism, the homeworks consisted of going through the readings and providing questions, and lectures were discussion-based. So basically, there was no computer science.

I ran the syllabus by a few people in the department and they basically told me: this looks great, do it! So I did, and it was the best experience I have had teaching a course. And I think/hope the students got a lot out of it as well. The students engaged deeply with the material, the discussions were great and I think the course worked even if the goal was and remains ambiguous.

But afterward, I thought a lot about why it worked. And I think a big part of the answer is Brown’s open curriculum. Because Brown has no real distribution requirements,[1] many of our computer science students had spent years taking anthropology, theater, philosophy and political science alongside their CS courses. So they had already been trained to reason about ambiguous, contested and messy problems. My course didn’t have to build that capacity from scratch; it was already there.

But then I wondered what this course would have looked like in a department with a standard CS curriculum, where students had spent four years trying to satisfy the CS distribution and the Science or Engineering distribution on top of the University distribution. I get the value of distributional requirements, but I don’t think these more standard curricula would have given the students the opportunity to develop the habits of mind that a course like mine needed.

The Problem with the STEM Curriculum

I’m grateful for the computer science education I received. But, given my personal experience and my 20 years working and interacting with other computer scientists, I don’t think the computer science and, more broadly, the traditional STEM curriculum is adequate for the current times.

The traditional curriculum either forces students to take mostly STEM courses (math, physics, CS, and so on) or lets them get away with doing so. This makes sense when your goal is to produce engineers who specialize in building and maintaining technical systems. But there are several problems with this.

The first, and most well-known, is that these students will be very narrow in their interests and skillsets. This point has been made many times before and, I believe, is not particularly controversial. But it is a serious issue given how much influence technology now has over people’s lives.

The more subtle issue, however, is that I think STEM education trains your brain in a very particular way. Others, like Kalev Leetaru, have pointed in this direction and argued that computer science reduces the world to numbers while the humanities teach us how much those numbers fail to capture. I think that’s right, but I want to push the point further.

The problem isn’t just that STEM-trained people don’t value the humanities. I am not a cognitive scientist or education expert so I may be completely wrong, but my impression is that a rigorous STEM education turns you into someone who can solve really difficult problems but only if those problems are well-posed. Well-posed problems are problems that have a unique solution and that behave predictably (i.e., change the input a little and the answer changes only a little).

So I think the problem with STEM education goes deeper than leaving gaps in your knowledge or leaving you indifferent to non-STEM fields. The deeper issue is that it reshapes your cognitive habits. It trains you to formalize, to reduce and to solve. And after years of this training, this becomes your default way to reason about every problem. Your brain learns to simplify and “flatten” problems before really understanding them.

For the STEM-minded, think of it this way. Every time you formalize a messy real-world problem you are projecting it down to a lower-dimensional space so that you can make it tractable. This is a legitimate and powerful thing to do; it is how we model the world so that we can build bridges, design chips and prove theorems. But after years of this training, the projection step becomes invisible. It’s not a choice anymore, it’s a habit and you lose the ability to perceive all the dimensions of a problem because you have been trained to discard most of them.

It’s like studying the shadow of a three-dimensional object cast on a wall. The shadow is real, it has structure, it can be measured and reasoned about rigorously. But there are a lot of things the shadow doesn’t capture and if all you’ve ever studied is shadows, you won’t even know to ask about them.

This might have been fine when computer scientists were mostly working on operating systems, databases, cryptography, networking etc. But those days are gone and we now work on technologies that interact with people, that impact people’s lives, jobs, educations, health etc. And these social problems are anything but well-posed! They are not clearly stated, they are very ambiguous and they don’t have unique solutions. They are messy, complex, fuzzy and contextual.

So my contention is that the traditional STEM education is inadequate for what we need from our computer science students. Not only because it leaves gaps in students’ knowledge but because it trains them with the wrong mental habits.

Why the Humanities Can Help

So if STEM training is not enough, then what is?

I think an education in the humanities is what’s needed. And I want to be precise about what I mean here. I am not saying that computer science students need to know more history or philosophy; again, I think this point has been made. I’m arguing that the humanities train a fundamentally different kind of reasoning and that this training is what’s missing.

My mother is a historian and this shaped me before I started coding or writing proofs. Growing up, I watched her analyze everything (e.g., the news, movies, everyday events) through a historical and sociological lens. She didn’t just watch the news; she could see whose story was being told, point out what was being left out and what historical patterns were repeating. That was just how she processed the world. I was also lucky to get a strong humanities education early on, where I spent years having to write highly analytical essays and sitting with texts that didn’t have clean answers. So I’ve seen both sides of this, and I think that background has shaped how I see the limitations of a purely technical education in ways that are hard to notice if you’ve only ever been inside STEM.

What a strong humanities education does, as far as I can tell, is train you to operate in exactly the situations where STEM leaves you helpless: the situations that are ambiguous, whose meaning is contested and that don’t have clean solutions.

In a literature course, you learn that a text can have multiple valid readings and that the interesting work is not in finding the answer but in constructing and defending an interpretation.[2] In a history course, you learn that the “obvious” narrative is usually the one that was told by those in power and that there are usually other stories that were suppressed, ignored or just not recorded. In philosophy, you learn to question your own assumptions and to recognize that even your definitions are choices with consequences.

Where STEM training teaches you to flatten a problem until it’s solvable, humanities training teaches you to keep the full problem in sight even when that makes reasoning about it harder.

And these are not just “soft skills,” as we sometimes refer to them. They are a different kind of rigor. A mathematician writes the proof of a theorem and a historian argues that a given interpretation of events is more defensible than another while acknowledging that the evidence is incomplete and that other interpretations have merit. Both endeavors are hard and both are rigorous but in different ways. And the latter is the one that I think maps to understanding the problems and harms that technology creates.

The Blind Spot

I want to stress that I am not saying that STEM-trained people are not capable of reasoning about complex matters. Many of us do, or at least try. And I am certainly not saying we should replace STEM education with humanities education. We obviously need people who can build things, who understand algorithms, systems and math. STEM training is great for the problems it’s designed to address.

What I am saying is that the gap is structural, not personal. It’s not that computer scientists lack curiosity or have bad intentions. It’s that the training we receive, when taken to an extreme and without a counterweight, narrows what we can perceive and how we reason about it. If you spend your entire education learning to solve well-posed problems you will, through no fault of your own, tend to turn every problem you encounter into a well-posed one. You will formalize it, define an objective function, optimize it and declare victory. And many times that is exactly the right thing to do. But when we train students exclusively this way, we are sacrificing something that is increasingly valuable.

Closing Thoughts

The humanities-trained person might not be able to solve the flattened version of a problem as effectively as we can, but they can see aspects of it that our training has made us blind to. And when you are building systems that affect people’s lives, everything matters. That is the blind spot. It’s not a lack of ability or good intentions, but a curriculum-induced inability to even perceive the dimensions of a problem that resist formalization. And you can’t reason properly about what you can’t see.

Epilogue

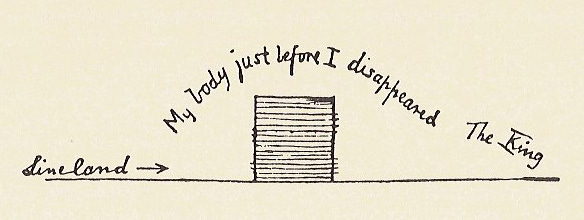

In 1884, Edwin Abbott Abbott published Flatland: A Romance of Many Dimensions, a novella about a square living in a two-dimensional world. The square can move left and right, forward and back. It can reason rigorously about its world. It is, by every measure available to it, competent. But it has no concept of up. When a sphere passes through Flatland, the square sees only a circle that appears from nowhere, grows, shrinks and vanishes. It can describe what it sees with precision. It just can’t understand what it’s looking at.

The square’s problem is not a lack of ability. It is a lack of dimensions. And no amount of rigorous two-dimensional reasoning will give it the third one. Someone has to show it that up exists.

Escaping Flatland is also the name of a popular Substack by Henrik Karlsson.

[1] I am simplifying things a bit because the CS department itself has distributional requirements; but the goal of this post is not to argue about the intricacies and nuances of the Brown undergraduate curriculum.

[2] This may sound obvious, but there is a difference between knowing this in theory and actually encountering different, unexpected analyses and having to defend your perspective in real life.